Last update: Aug 2, 2022

Introduction

Handy simple scripter is a web app scripting tool with motion tracking; all you need is the Chrome web browser. There is a post introducing the software and its features. It does have some bugs, so please report them to @handyAlexander.

This is a short guide on how to use it to generate a script with motion tracking. For general scripting tips, see How to get started with scripting.

Loading a video

Go to Handy simple scripter. Here is what you will see:

At the top there are several icons:

- Components - show or hide features (the white boxes in the main window). Some components are shown by default, like the video preview saying “No video loaded” and the graph component, while others, such as the motion trackers, need to be turned on before you can use them.

- File - load files or export script

- Settings - different display and other settings

- Help - keyboard shortcuts and a tutorial video introducing the tool

Drag and drop a video file or click the floppy disk icon to load a video.

For motion tracking, the tool offers two trackers with different features.

Neural Network Pose Detection

This is a simple-to-use but fairly limited motion tracker. It automatically tracks a selected body part (for example a nose or a hand), and only along the Y-axis (up and down). It tracks only one body part at a time, so it can only follow one person's movement.

This tracker can work well for a POV blowjob vid where the nose is visible and the person being blown doesn’t move much (like in the screenshot below).

To activate it, click Components and show Neural Network Pose Detection. Let’s also change the order to 2 so it’s shown right beneath the video:

Load the tracker by clicking Start pose detector inside the component. Here you can change the confidence score used, how often points are tracked, and which body part to track (default is Nose).
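To illustrate how the confidence and tracking-interval settings might interact, here is a minimal Python sketch. It assumes a detector that returns, for each frame, keypoints with a Y coordinate and a confidence score; the `Keypoint` type, the `sample_positions` function and its parameters are hypothetical and not the tool's actual code. Only the Y coordinate is kept, matching the tracker's up-and-down-only behaviour.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Keypoint:
    """Hypothetical detector output for one body part in one frame."""
    name: str     # e.g. "nose"
    y: float      # vertical pixel coordinate (0 = top of the frame)
    score: float  # detector confidence in [0, 1]


def sample_positions(
    frames: List[List[Keypoint]],
    fps: float,
    part: str = "nose",
    min_score: float = 0.5,
    interval_ms: float = 100.0,
) -> List[Tuple[int, float]]:
    """Return (time_ms, y) samples for one body part.

    A frame is considered at most once every `interval_ms`; the sample is
    dropped if the part was not detected or its score is below `min_score`.
    """
    samples: List[Tuple[int, float]] = []
    next_ms = 0.0
    for index, keypoints in enumerate(frames):
        t_ms = index * 1000.0 / fps
        if t_ms < next_ms:
            continue  # too soon after the previously sampled frame
        next_ms = t_ms + interval_ms
        match = next((k for k in keypoints if k.name == part), None)
        if match is not None and match.score >= min_score:
            samples.append((round(t_ms), match.y))
    return samples
```

With three frames at 10 fps where the middle frame scores only 0.2, the default threshold of 0.5 keeps just the samples at 0 ms and 200 ms. Lowering the threshold trades more samples for a higher risk of tracking the wrong thing.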

You can now navigate the video and see how well the tracker is tracking the body part. Use the slider above the graph or arrow keys to navigate:

The part tracked is marked with a green circle. If the tracker can’t find the body part well, you can try picking another body part (Tracking point) or consider using another vid.

Now let’s track. Navigate to the part of the vid where you want the script to start and click Track. The tracking will now begin and you can see the progress in the video preview. Graphs will be generated in the graph window.

Computer Vision Tracker

This is a more flexible tracker: you decide yourself what to track, and you can track two objects moving in any direction.

To activate it, click Components and show Computer Vision Tracker. Let’s also change the order to 2 so it’s shown right beneath the video:

Load the tracker by clicking Start computer vision tracker inside the component. Here you can change the size of the tracked object, how often points are tracked, and whether several points should be tracked. If only one point is tracked, there is still a second point that its movement is tracked against. This reference point is always locked to the bottom center of the video (the blue box in the video window).

In the video window, two boxes are visible. The red box is the object being tracked and the blue box is the reference point it is tracked against. Click in the video to move the red box. If you uncheck “Use single point”, you can also move the blue box by right-clicking.
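One way to picture "tracked against a reference point" is to measure the red box relative to the blue box in every frame. The minimal Python sketch below does that by taking the distance between the two box centres; this is an assumption for illustration, not how the tool necessarily computes its graph, and the `relative_signal` function is hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) centre of a box, in pixels


def relative_signal(tracked: List[Point], reference: List[Point]) -> List[float]:
    """Per-frame distance between the tracked (red) and reference (blue) box centres.

    Movement shared by both boxes, such as a camera pan, shifts both centres
    by the same amount and leaves the distance unchanged.
    """
    if len(tracked) != len(reference):
        raise ValueError("both boxes need one position per frame")
    return [math.dist(t, r) for t, r in zip(tracked, reference)]
```

For example, if the red box goes from (100, 50) to (110, 60) while the blue box pans from (100, 200) to (110, 200), the signal changes from 150 to 140: the sideways pan cancels out and only the 10-pixel approach remains. A distance ignores direction; a real tracker might instead project the offset onto a chosen axis.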

To track, navigate to the part of the vid where you want the script to start and click Start Tracking or press y. Tracking begins and graphs are generated in the graph window. Watch the red and blue boxes to check that the tracker is following the objects you want to track. If the points are lost or start drifting, pause, go back if needed, and correct them. Finding good points to track takes some experimenting and trial and error.

Tweaking the generated graph

After writing this guide, I realized this part is much easier to do in OpenFunScripter (OFS), which runs on all platforms, even Mac OS X. Nowadays, I usually just export the graph, load it in OFS, use its Simplifier feature to reduce the number of points, and then tweak the points in OFS.
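The exact algorithm behind OFS's Simplifier is not described here. As an illustration of the general idea of point reduction, the minimal Python sketch below applies a Ramer-Douglas-Peucker style simplification to `(time_ms, pos)` points, measuring the error vertically (in position percent) against the straight line between kept points. The function names and the `epsilon` tolerance are hypothetical.

```python
from typing import List, Tuple

Action = Tuple[int, float]  # (time_ms, pos in %)


def _vertical_error(p: Action, a: Action, b: Action) -> float:
    """Distance in % between p and the straight line from a to b at p's time."""
    (t, y), (ta, ya), (tb, yb) = p, a, b
    if tb == ta:
        return abs(y - ya)
    expected = ya + (yb - ya) * (t - ta) / (tb - ta)
    return abs(y - expected)


def simplify(actions: List[Action], epsilon: float) -> List[Action]:
    """Drop points that stay within `epsilon` % of the line between kept neighbours."""
    if len(actions) < 3:
        return list(actions)
    keep = [False] * len(actions)
    keep[0] = keep[-1] = True
    stack = [(0, len(actions) - 1)]  # index ranges still to examine
    while stack:
        start, end = stack.pop()
        worst_i, worst = -1, -1.0
        for i in range(start + 1, end):
            err = _vertical_error(actions[i], actions[start], actions[end])
            if err > worst:
                worst_i, worst = i, err
        if worst_i != -1 and worst > epsilon:
            keep[worst_i] = True  # this point matters: split the range around it
            stack.append((start, worst_i))
            stack.append((worst_i, end))
    return [a for a, k in zip(actions, keep) if k]
```

A larger `epsilon` removes more points. An explicit stack is used instead of recursion so that long scripts cannot hit Python's recursion limit.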

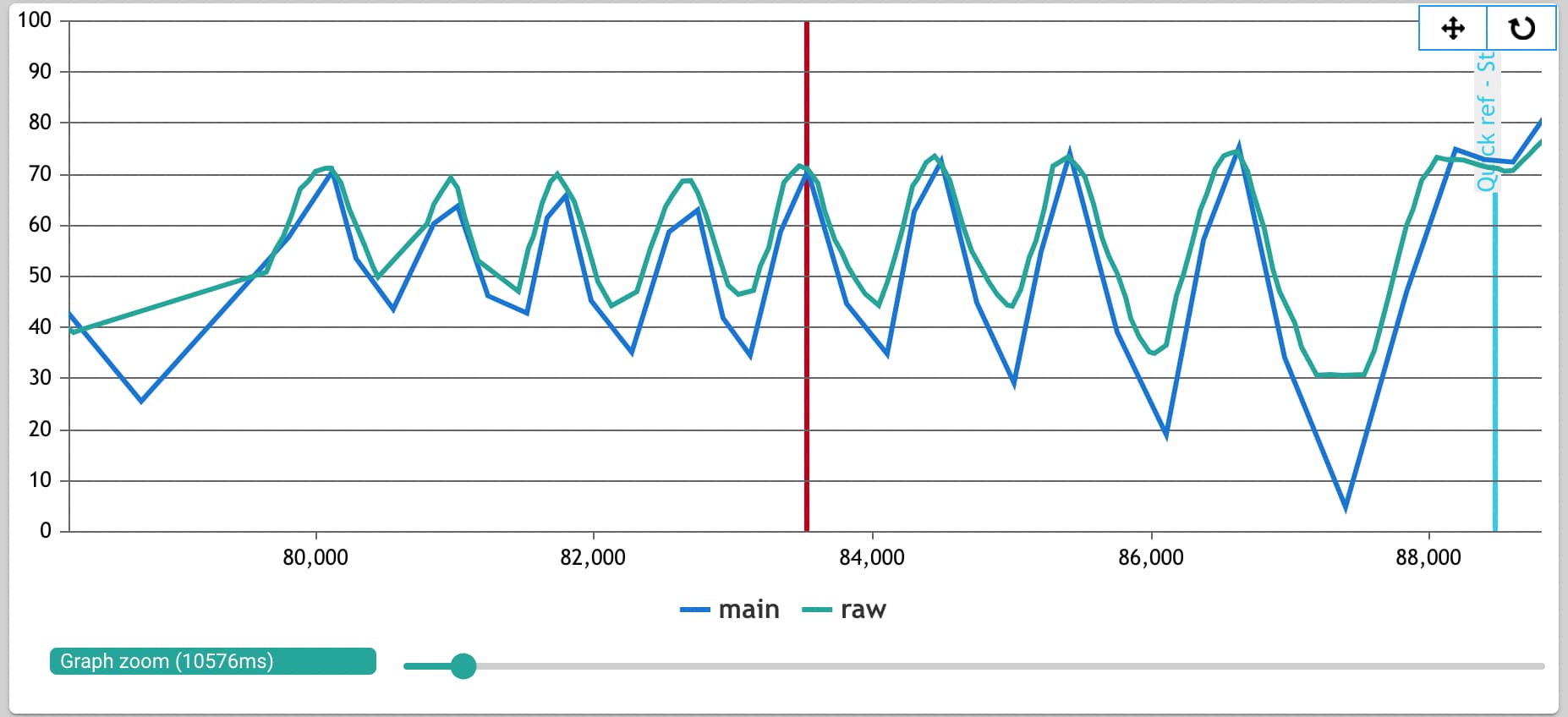

Once tracking has finished for the entire vid (or you pressed Pause), two graphs are generated:

The raw graph is the tracked motion. The main graph is a version of raw with mid points removed, normalised so that the maximum movement is 100% and the minimum 0%. Main is usually the better starting point for working on the script, but if you are not pleased with it, you can take raw and process it yourself using the Process raw data component.
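The two steps that turn raw into main, removing mid points and normalising, can be sketched as follows. This is a minimal Python illustration assuming that "mid points" means samples where the movement does not change direction; the tool's exact processing is not documented here, and `turning_points`, `normalize` and `make_main` are hypothetical names.

```python
from typing import List, Tuple

Sample = Tuple[int, float]  # (time_ms, value)


def turning_points(samples: List[Sample]) -> List[Sample]:
    """Keep the first and last sample plus every point where the direction flips."""
    if len(samples) < 2:
        return list(samples)
    kept = [samples[0]]
    direction = 0  # +1 rising, -1 falling, 0 not yet known
    for i in range(1, len(samples)):
        delta = samples[i][1] - samples[i - 1][1]
        if delta == 0:
            continue  # flat stretch: keep the current direction
        step = 1 if delta > 0 else -1
        if direction != 0 and step != direction:
            kept.append(samples[i - 1])  # previous sample was a peak or valley
        direction = step
    kept.append(samples[-1])
    return kept


def normalize(points: List[Sample]) -> List[Sample]:
    """Rescale values so the lowest becomes 0 and the highest 100."""
    if not points:
        return []
    values = [v for _, v in points]
    lo, hi = min(values), max(values)
    if hi == lo:
        return [(t, 0.0) for t, _ in points]  # no movement at all
    return [(t, 100.0 * (v - lo) / (hi - lo)) for t, v in points]


def make_main(raw: List[Sample]) -> List[Sample]:
    return normalize(turning_points(raw))
```

For example, raw values 10, 20, 30, 20, 10, 25 sampled every 100 ms become the four points 0, 100, 0 and 75. If your raw values are pixel rows that grow downward, subtracting each normalised value from 100 flips the graph.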

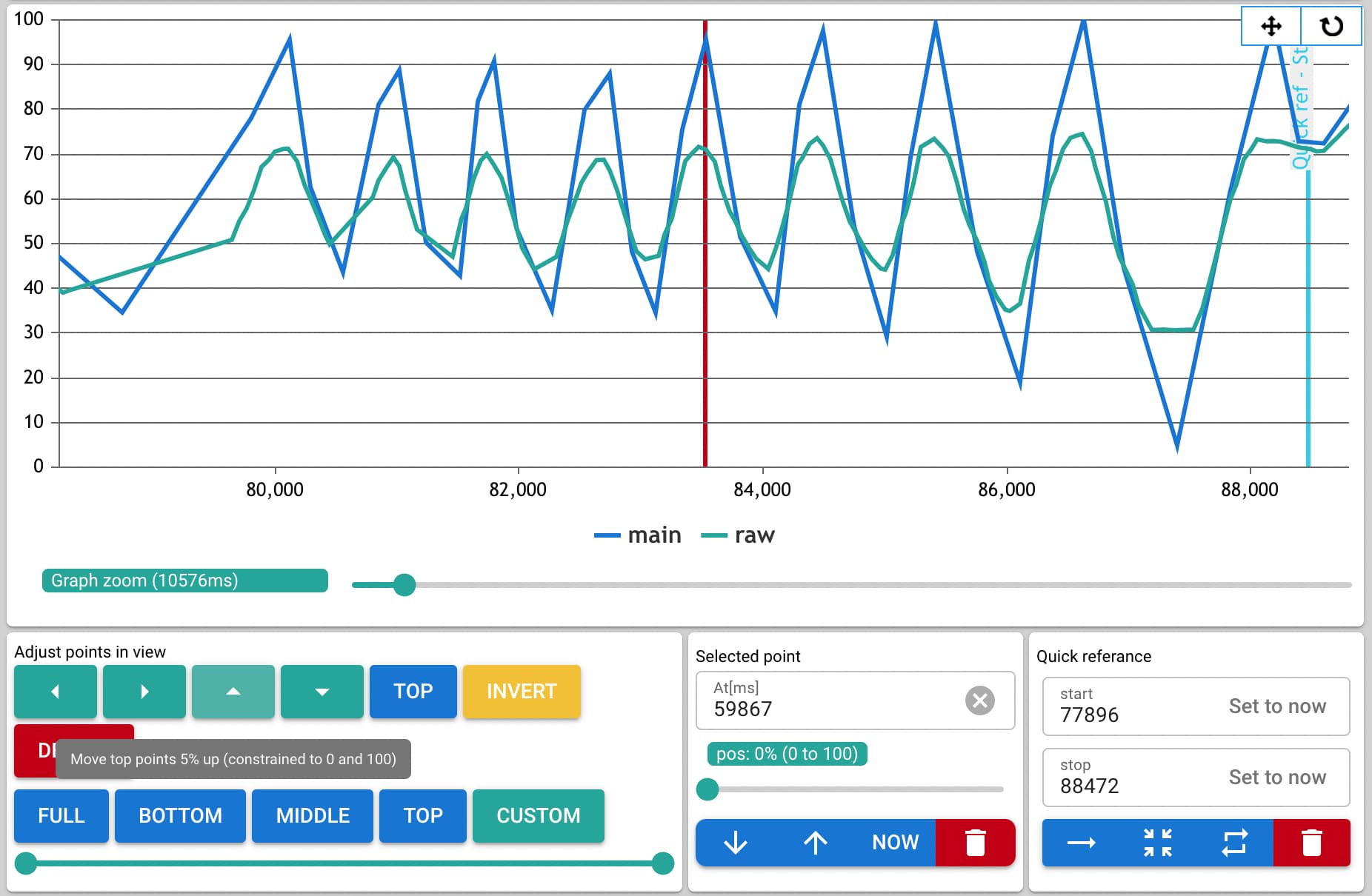

Instead, we will use the Points in view component. Hide the tracker component you used and move Points in view up to order 7 so it’s shown under the graph.

Navigate to the graph section you want to work on using the sliders above the graph (Seeker) and below it (Zoom).

Use Seeker and check how well the graph matches the action in the video. Often, a section will look like this:

The blue graph peaks at 75%, but judging by the vid it should reach 100% (the tip). Let’s fix it. In Points in view, click All and select Top instead, so that only the top points are moved. Then move the top points up with the small up arrow. The same can be done with the bottom points or with all points in view.
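The Top / Bottom / All selection above can be modelled as: pick the points in the visible time window that are peaks, valleys, or all of them, then shift them by a fixed amount while staying within 0-100%. The minimal Python sketch below assumes a "top" point is one higher than both its neighbours; `move_points`, its parameters and the clamping behaviour are hypothetical, not the component's actual code.

```python
from typing import List, Tuple

Action = Tuple[int, float]  # (time_ms, pos in %)


def _is_extreme(points: List[Action], i: int, sign: int) -> bool:
    """True if points[i] is above (sign=1) or below (sign=-1) all its neighbours."""
    pos = points[i][1]
    neighbours = [points[j][1] for j in (i - 1, i + 1) if 0 <= j < len(points)]
    return bool(neighbours) and all(sign * (pos - n) > 0 for n in neighbours)


def move_points(
    points: List[Action], start_ms: int, end_ms: int, delta: float, which: str = "all"
) -> List[Action]:
    """Shift the selected points in [start_ms, end_ms] by delta %, clamped to 0-100."""
    if which not in ("all", "top", "bottom"):
        raise ValueError("which must be 'all', 'top' or 'bottom'")
    moved: List[Action] = []
    for i, (t, pos) in enumerate(points):
        in_view = start_ms <= t <= end_ms
        selected = which == "all" or _is_extreme(points, i, 1 if which == "top" else -1)
        if in_view and selected:
            pos = min(100.0, max(0.0, pos + delta))  # keep within 0-100 %
        moved.append((t, pos))
    return moved
```

In the example from the text, raising the top points by 25 turns a 75% peak into 100%; a peak of 80% would be clamped at 100% rather than going past it.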

While doing this, it is handy to use the keyboard shortcuts j and k to set the start and end points of the section you are working on. Then, click the zoom button in the quick reference component to view the section between the points:

When you are happy, move on to another graph section and do the same: check the vid and the graph, select a section and adjust the points.

Alternatively, move on to tweaking manually.

Tweaking frame by frame

After using the motion tracker, some parts will almost certainly need manual tweaking. You will probably want to delete a lot of unnecessary points to simplify the script, move some points up or down, and add some points.

Some useful shortcuts:

- Left / Right - Move one frame back / forward

- N / B - Select next/previous point

- Z / X - Move selected point back / forward

- 0-9 - Insert a point at 10% × X at the current time (for example, 7 inserts a point at 70%)

- DEL - Delete selected point

- ctrl + DEL - Delete all points in view
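The 0-9 shortcut boils down to simple arithmetic (position = digit × 10%) plus inserting the new point in time order. The minimal Python sketch below models that; `insert_digit_point` is hypothetical, and replacing an existing point at exactly the same time is an assumption, since the guide does not say what the app does in that case.

```python
import bisect
from typing import List, Tuple

Action = Tuple[int, int]  # (time_ms, pos in %)


def insert_digit_point(actions: List[Action], current_ms: int, key: str) -> List[Action]:
    """Insert a point at key x 10 % at current_ms, keeping the list sorted by time."""
    if len(key) != 1 or key not in "0123456789":
        raise ValueError("key must be a single digit 0-9")
    pos = int(key) * 10
    times = [t for t, _ in actions]
    i = bisect.bisect_left(times, current_ms)
    result = list(actions)
    if i < len(result) and result[i][0] == current_ms:
        result[i] = (current_ms, pos)  # assumption: replace a point at the same time
    else:
        result.insert(i, (current_ms, pos))
    return result
```

Pressing 7 at 500 ms between points at 0 ms and 1000 ms yields a new 70% point in the middle of the list.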

When you are pleased with the result, click File and export your funscript.
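The exported file is, to our understanding, a `.funscript` file in the widely used funscript JSON layout: a top-level object whose `actions` list holds `at` (time in milliseconds) and `pos` (position 0-100) entries. The minimal Python sketch below builds such a file from `(time_ms, pos)` points. The `to_funscript` helper is hypothetical, and the `version`, `inverted` and `range` fields follow the common layout rather than anything this guide specifies.

```python
import json
from typing import Iterable, Tuple


def to_funscript(actions: Iterable[Tuple[float, float]], inverted: bool = False) -> str:
    """Serialise (time_ms, pos) points as funscript JSON, sorted by time."""
    return json.dumps(
        {
            "version": "1.0",
            "inverted": inverted,
            "range": 100,
            "actions": [
                # Positions are clamped to 0-100 and both fields stored as integers.
                {"at": round(t), "pos": round(min(100.0, max(0.0, p)))}
                for t, p in sorted(actions)
            ],
        },
        indent=2,
    )
```

Writing the returned string to a file such as `my-video.funscript` (for example with `pathlib.Path.write_text`) gives a plain-text file you can inspect or load into other tools such as OFS.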

Some general tips

- Save often by exporting the script. The web app has some bugs and it is easy to mess things up.

- Read the tips in the How to get started with scripting guide.

- Motion track only sections that allow for it, script the rest manually. Do not try to motion track when the movement is not visible, there are no good points to track or when movement is very subtle - you will only waste time.

- One approach is to motion track the whole vid, look at the output and delete the sections that did not track well. Another is to get familiar with the vid first and motion track only the sections that allow for it.

- Remove unnecessary mid points generated by motion tracking. A mid point between two points that does not change the direction of movement may, for example, make the Handy stop briefly. Mid points might not be visible in the graph, so use n and b to step through the graph and clean up unnecessary points.

- Watch out for movements that are too slow or too fast; the hardware has limitations. The Selected Point component can be used to check whether the speed of a movement is too slow or too fast. The Handy has a speed limit of 400 mm/s. It can still handle faster commands, but the stroke will be limited.

- Don’t add too many points, as the device cannot handle too many commands. One way to keep points apart is to go to Settings > Div and set FPS to 10. Navigating with the left and right arrows then skips 100 ms at a time, and points should be no closer together than this. You can use b and n to select and delete any points that are closer.

- If the app seems broken, save your script by exporting it, go to Settings > Data > Delete all data and refresh application to start over, and then import your script again.
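Two of the tips above can be checked mechanically: the 400 mm/s speed limit and the 100 ms minimum spacing (at 10 FPS, one arrow-key step is 1000 / 10 = 100 ms). The speed between two points is |Δpos| / 100 × stroke length, divided by Δt in seconds. The guide does not give the stroke length, so `stroke_mm` is an assumption you must supply, and the `find_problems` helper is hypothetical.

```python
from typing import List, Tuple

Action = Tuple[int, float]  # (time_ms, pos in %)


def find_problems(
    actions: List[Action],
    stroke_mm: float,
    max_speed_mm_s: float = 400.0,
    min_gap_ms: float = 100.0,
) -> List[Tuple[int, str]]:
    """Flag points that come too soon after the previous one or imply too fast a move."""
    problems: List[Tuple[int, str]] = []
    for (t0, p0), (t1, p1) in zip(actions, actions[1:]):
        gap_ms = t1 - t0
        if gap_ms <= 0 or gap_ms < min_gap_ms:
            problems.append((t1, f"only {gap_ms} ms after the previous point"))
            continue  # no meaningful speed for such a short gap
        distance_mm = abs(p1 - p0) / 100.0 * stroke_mm
        speed = distance_mm / (gap_ms / 1000.0)
        if speed > max_speed_mm_s:
            problems.append((t1, f"{speed:.0f} mm/s is above {max_speed_mm_s:.0f} mm/s"))
    return problems
```

With `stroke_mm=100.0` (an illustrative value, not a Handy specification), a move from 100% to 0% in 100 ms works out to 1000 mm/s and gets flagged, while the same move over 500 ms (200 mm/s) passes. Remember that the Handy still accepts faster commands, so a flag means the stroke will be limited rather than that the script is broken.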